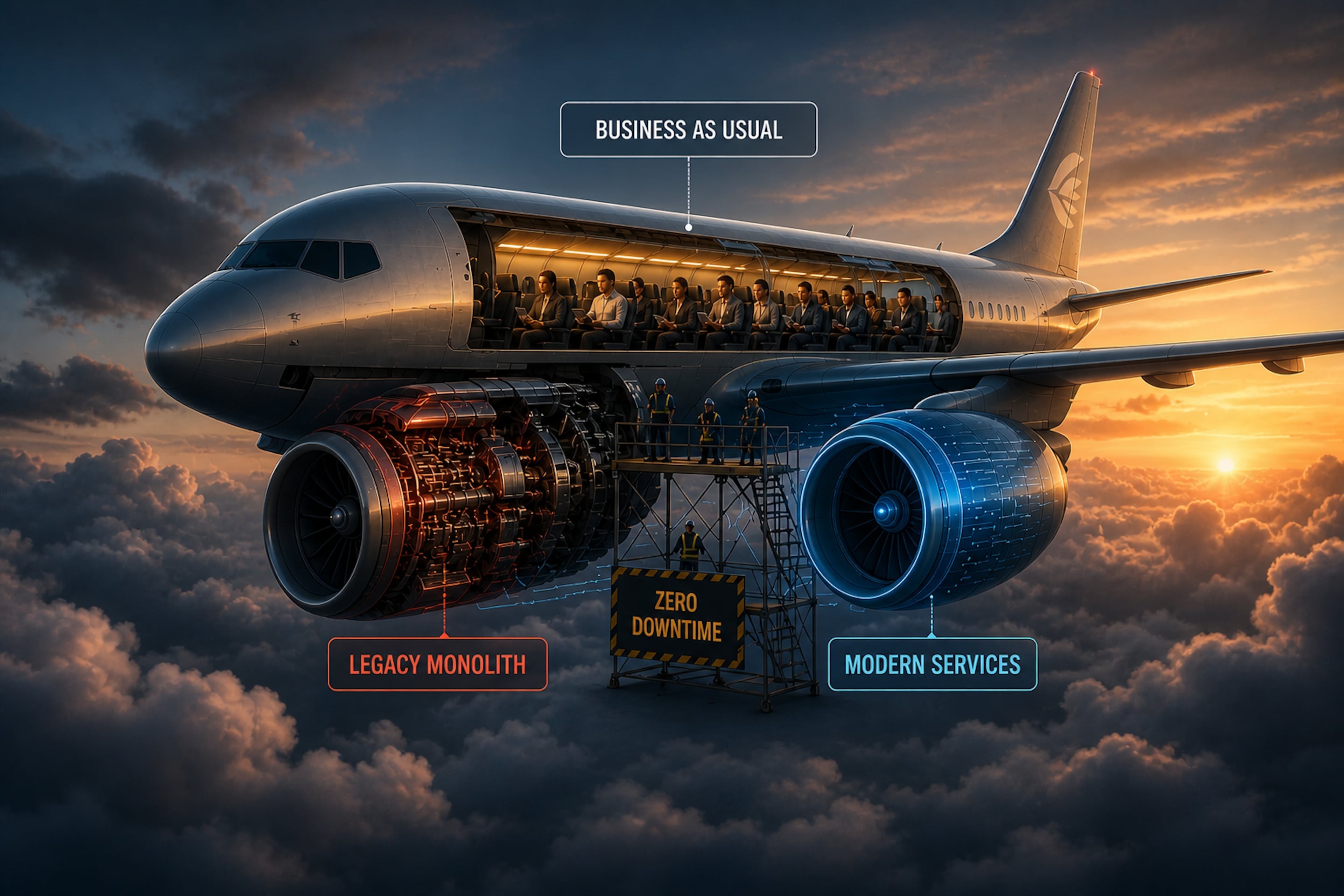

Change the Engine Mid-Flight: The Strangler Fig Pattern in Production

Having lived through big-bang migrations many times, I have yet to meet one that wasn’t underestimated. Not in scope: engineers scope migrations reasonably well. What gets underestimated is the operational reality of running two systems simultaneously while the business continues at full speed, processing transactions, filing regulatory reports, and serving customers who have no idea a rebuild is happening underneath them.

When people say “hollowing out” a monolith, they imagine moving folders. In practice, you are surgically extracting logic while maintaining referential integrity and transactional consistency across systems that don’t share a runtime. Miss a step, and you don’t get a bug. You get a distributed state nightmare — the kind that wakes you up at 3 AM with a pager and no clean rollback path.

This is what the Strangler Fig pattern actually looks like in production. And even after doing it multiple times, I have yet to see one that felt smooth as air — but here are my honest thoughts on how to get as close as possible.

The Traffic Layer: Intelligence at the Edge

The first instinct is to let application code handle routing between the old and new system. Resist it. Routing decisions belong at the infrastructure layer: an Ingress Controller, an Envoy proxy, or a Service Mesh like Istio.

The technique is header-based routing. Not URL routing; anyone can point /api/payments at a new service. Metadata routing. You direct beta users, internal employees, or specific tenant IDs to the new stack while 99% of traffic stays on the legacy core. This gives you canary deployments scoped to real business risk: not random traffic percentages, but the specific customer segments where the cost of failure is lowest.

The practical benefit: your first users on the new system are your own engineers. You find the 3 AM edge cases before customers do.
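To make the routing rule concrete, here is a minimal sketch of the decision itself. In production this logic would live in the proxy or mesh configuration, not in application code; Python is used here only to show the shape of the rule. The header names (x-internal-user, x-tenant-id), the upstream names, and the tenant ID are all hypothetical.

```python
from dataclasses import dataclass

LEGACY = "legacy-monolith"   # hypothetical upstream names
NEW = "new-service"


@dataclass(frozen=True)
class RoutingPolicy:
    """Metadata-based canary rules. Header names are assumptions for illustration."""
    internal_header: str = "x-internal-user"
    tenant_header: str = "x-tenant-id"
    canary_tenants: frozenset = frozenset()

    def choose_upstream(self, headers: dict) -> str:
        # HTTP header names are case-insensitive, so normalize before matching.
        h = {k.lower(): v for k, v in headers.items()}
        if h.get(self.internal_header) == "true":
            return NEW            # internal employees go first
        if h.get(self.tenant_header) in self.canary_tenants:
            return NEW            # explicitly opted-in tenants
        return LEGACY             # everyone else stays on the legacy core


policy = RoutingPolicy(canary_tenants=frozenset({"tenant-42"}))
internal = policy.choose_upstream({"X-Internal-User": "true"})
canary = policy.choose_upstream({"X-Tenant-Id": "tenant-42"})
default = policy.choose_upstream({"X-Tenant-Id": "tenant-7"})
```

The default branch is the important one: anything that does not match an explicit rule falls through to the legacy path, so a missing or malformed header can never push a customer onto the new stack by accident.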

The Distributed Data Problem: One Master, One Truth

The biggest technical hazard in any decomposition is the Distributed Data Problem. If both the monolith and the new service can write to the same logical record simultaneously, you will face race conditions that no amount of database locking will reliably solve.

The principle is absolute: during the hollowing process, only one system is the Master of Record for any given bounded context. No exceptions.

The implementation uses Change Data Capture. You attach Debezium to the legacy SQL transaction log (or, as we did at MatahariMall, parse the binlog through a Go service acting as CDC) and every INSERT, UPDATE, and DELETE is streamed to a Kafka topic. The new service consumes this stream to build its own optimized read model, using whatever storage best fits its access patterns. This is Polyglot Persistence done with purpose: not polyglot for the sake of it, but because the new service’s read profile genuinely differs from the old one.
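The consuming side can be sketched as a small fold over change events. The sketch below assumes events shaped like the Debezium envelope (an op code plus before and after row images); the Kafka consumer loop is omitted, the read model is just an in-memory dict, and the table columns (id, status) are made up for illustration.

```python
from typing import Any, Dict

ReadModel = Dict[Any, Dict[str, Any]]


def apply_change(model: ReadModel, event: Dict[str, Any], key: str = "id") -> None:
    """Apply one Debezium-style change event to an in-memory read model."""
    op = event["op"]
    if op in ("c", "r", "u"):        # create, snapshot read, update
        row = event["after"]
        model[row[key]] = row        # upsert, so replaying an event is harmless
    elif op == "d":                  # delete: only the "before" image is set
        model.pop(event["before"][key], None)
    else:
        raise ValueError(f"unknown op: {op!r}")


# Hypothetical stream for an orders table.
events = [
    {"op": "c", "before": None, "after": {"id": 1, "status": "PENDING"}},
    {"op": "u", "before": {"id": 1, "status": "PENDING"},
     "after": {"id": 1, "status": "PAID"}},
    {"op": "c", "before": None, "after": {"id": 2, "status": "PENDING"}},
    {"op": "d", "before": {"id": 2, "status": "PENDING"}, "after": None},
]

orders: ReadModel = {}
for e in events:
    apply_change(orders, e)
```

Writing the handler as an upsert rather than a strict insert is a deliberate choice: consumers typically see at-least-once delivery, so the read model has to tolerate the same event arriving twice.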

The flip is the delicate moment. When you move write authority to the new service, the CDC must reverse direction. The new service writes to its own store, and an event streams back to keep the legacy database in sync — because parts of the monolith that haven’t been extracted yet still depend on it. Two systems, one coherent truth.
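One way to keep the single-writer rule honest is to make write authority an explicit piece of state that both the write path and the sync pipeline consult. The following is a sketch under that assumption; the class, the context name "payments", and the system labels "legacy" and "new" are hypothetical and not taken from any specific tool.

```python
SYSTEMS = ("legacy", "new")


class WriteAuthority:
    """Tracks which system is Master of Record for each bounded context."""

    def __init__(self, owners: dict):
        self._owners = dict(owners)

    def assert_can_write(self, system: str, context: str) -> None:
        # Reject writes from whichever side does not currently own the context.
        if self._owners[context] != system:
            raise PermissionError(
                f"{system} is not master of record for {context}")

    def sync_direction(self, context: str) -> tuple:
        """Change events always flow from the owner to the other system."""
        source = self._owners[context]
        target = "new" if source == "legacy" else "legacy"
        return (source, target)

    def flip(self, context: str, new_owner: str) -> None:
        if new_owner not in SYSTEMS:
            raise ValueError(f"unknown system: {new_owner!r}")
        self._owners[context] = new_owner


auth = WriteAuthority({"payments": "legacy"})
before_flip = auth.sync_direction("payments")
auth.flip("payments", "new")
after_flip = auth.sync_direction("payments")
```

Because the sync direction is derived from the owner rather than stored separately, flipping authority and reversing the CDC cannot drift apart in this model. A real flip also has to drain in-flight events before the switch, which this sketch does not attempt.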

The Local Join Problem in Transitional Architectures

At some point during the migration, you will have a piece of logic that needs data split across two systems — half in the new service’s Postgres, half still in the monolith’s SQL Server.

The wrong answer is to let the monolith query the new database directly. That creates tight coupling that makes the migration permanent rather than transitional. You’re not extracting the service — you’re just relocating the dependency.

The right answer is a communication layer on top of it: the monolith calls an internal API on the new service. The new service owns the data; the monolith asks for it politely.

The cost is real. You’ve introduced a network hop where there was previously a local join. In high-concurrency environments — and at MatahariMall we felt this acutely — that hop degrades your p99 latency in ways that compound under load. We mitigate with request collapsing and sidecar caching, but don’t pretend the cost disappears. It doesn’t. It becomes manageable. That’s different.
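Request collapsing is easier to reason about with code in front of you. The idea is that concurrent lookups for the same key share a single call across the network hop instead of each paying for their own. This is a minimal asyncio sketch, not what we ran in production; fetch_customer and the key "c-1" are stand-ins for the internal API call.

```python
import asyncio
from typing import Any, Awaitable, Callable, Dict


class RequestCollapser:
    """Coalesces concurrent lookups for the same key into one upstream call."""

    def __init__(self, fetch: Callable[[str], Awaitable[Any]]):
        self._fetch = fetch
        self._in_flight: Dict[str, asyncio.Future] = {}

    async def get(self, key: str) -> Any:
        existing = self._in_flight.get(key)
        if existing is not None:
            return await existing            # piggyback on the call in flight
        task = asyncio.ensure_future(self._fetch(key))
        self._in_flight[key] = task
        try:
            return await task
        finally:
            # Once the call settles, the next caller triggers a fresh fetch.
            self._in_flight.pop(key, None)


calls = 0


async def fetch_customer(key: str) -> dict:
    """Hypothetical stand-in for the internal API on the new service."""
    global calls
    calls += 1
    await asyncio.sleep(0.01)                # simulates the network hop
    return {"id": key}


async def demo() -> list:
    collapser = RequestCollapser(fetch_customer)
    return await asyncio.gather(*(collapser.get("c-1") for _ in range(50)))


results = asyncio.run(demo())
```

Fifty concurrent callers produce one upstream request here. Note that this only helps while requests overlap in time; it is not a cache, which is why it pairs with sidecar caching rather than replacing it.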

Proving Equivalence: Shadow Before You Flip

How do you prove the new service is behaviorally identical to the old code? Unit tests are necessary but not sufficient. The only proof that matters is production reality.

The technique is traffic shadowing with a diff-logger.

Incoming requests hit the proxy and branch. The legacy path processes normally and returns its response to the client. The shadow path processes in fire-and-forget mode — its response is buffered but never returned. A background worker pulls both responses, runs a deep diff on the JSON, and logs every divergence.

What you discover will humble you. The monolith returns null where the new service returns an empty string. Timestamps differ in format: 2024-04-03T13:30:29Z on one side, 1712151029 on the other. Decimal precision differs. Field ordering differs in ways that matter to downstream consumers you didn’t know existed.

You iterate until the diff-logger produces only noise — expected, understood variance — and no signal. Only then do you flip write authority. Not before.
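The comparison at the heart of a diff-logger can be sketched as a recursive walk over the two decoded JSON bodies. This is an illustrative version, not a specific library; the field names in the example payloads are invented.

```python
import json
from typing import Any, List


def deep_diff(legacy: Any, shadow: Any, path: str = "$") -> List[str]:
    """Return human-readable divergences between two decoded JSON values."""
    if isinstance(legacy, dict) and isinstance(shadow, dict):
        out: List[str] = []
        for key in sorted(legacy.keys() | shadow.keys()):
            child = f"{path}.{key}"
            if key not in shadow:
                out.append(f"{child}: missing in shadow")
            elif key not in legacy:
                out.append(f"{child}: missing in legacy")
            else:
                out.extend(deep_diff(legacy[key], shadow[key], child))
        return out
    if isinstance(legacy, list) and isinstance(shadow, list):
        if len(legacy) != len(shadow):
            return [f"{path}: length {len(legacy)} != {len(shadow)}"]
        out = []
        for i, (a, b) in enumerate(zip(legacy, shadow)):
            out.extend(deep_diff(a, b, f"{path}[{i}]"))
        return out
    # Compare type as well as value so null vs "" and 1 vs "1" both surface.
    if type(legacy) is not type(shadow) or legacy != shadow:
        return [f"{path}: {legacy!r} != {shadow!r}"]
    return []


legacy_body = json.loads(
    '{"note": null, "created_at": "2024-04-03T13:30:29Z", "amount": 10.5}')
shadow_body = json.loads(
    '{"note": "", "created_at": 1712151029, "amount": 10.5}')

diffs = deep_diff(legacy_body, shadow_body)
```

One limitation worth stating: once the bodies are parsed into dicts, field ordering is no longer compared. If ordering matters to a downstream consumer, that check has to run against the raw response bytes instead.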

The Kill Switch: Keep the Monolith Warm

In banking, a 500 error is not a degraded experience. It is a compliance event and a trust erosion. The fallback architecture must be automatic, not dependent on a human being awake and paying attention.

Circuit breaker logic: if the new service exceeds a latency threshold (300 milliseconds at p95 is our line) or an error rate of 0.5%, the ingress proxy stops routing traffic there immediately and redirects 100% back to the legacy monolith. No message, no approval, no runbook. It just happens.
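The trip condition can be sketched in a few lines. The thresholds below are the ones stated above; the window size, the minimum sample count, and the choice to stay tripped until someone resets it are assumptions added for the sketch, and a real proxy would compute this incrementally rather than re-sorting on every request.

```python
import math
from collections import deque


class FallbackBreaker:
    """Trips to the legacy path on p95 latency or error-rate breach."""

    def __init__(self, p95_limit_ms: float = 300.0, max_error_rate: float = 0.005,
                 window: int = 1000, min_samples: int = 100):
        self.p95_limit_ms = p95_limit_ms
        self.max_error_rate = max_error_rate
        self.min_samples = min_samples           # assumption: avoid tripping on noise
        self._samples = deque(maxlen=window)     # rolling (latency_ms, ok) pairs
        self.open = False

    def record(self, latency_ms: float, ok: bool) -> None:
        self._samples.append((latency_ms, ok))
        if len(self._samples) < self.min_samples:
            return
        if (self.p95() > self.p95_limit_ms
                or self.error_rate() > self.max_error_rate):
            self.open = True                     # assumption: stays open until reset

    def p95(self) -> float:
        ordered = sorted(lat for lat, _ in self._samples)
        rank = math.ceil(0.95 * len(ordered))    # nearest-rank percentile
        return ordered[rank - 1]

    def error_rate(self) -> float:
        failures = sum(1 for _, ok in self._samples if not ok)
        return failures / len(self._samples)

    def upstream(self) -> str:
        return "legacy-monolith" if self.open else "new-service"


breaker = FallbackBreaker(window=200, min_samples=100)
for _ in range(100):
    breaker.record(50.0, ok=True)                # healthy traffic
healthy_route = breaker.upstream()
breaker.record(50.0, ok=False)                   # 1 failure in 101 is about 1%
tripped_route = breaker.upstream()
```

A single failure in 101 requests is already above the 0.5% line, which shows how strict that threshold is at low volume and why some minimum sample count is worth having.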

The technical humility required here is significant. The monolith is your Safe Mode. You keep it warm. You keep it patched. You keep its capacity scaled. You do not decommission it until the new service has survived a genuine peak load event (a payday salary cycle, a regulatory processing window, a product campaign that sends traffic to three times baseline) without triggering a single automatic fallback.

Engineers hate maintaining two systems. Resist the pressure to shut down the old one early. The monolith earned its uptime over years of production hardening. Respect that.

Architecture as Surgery

Hollowing out a core system is not a cleanup task or a technical debt sprint. It is distributed systems engineering at its most demanding — requiring a deep respect for state, a healthy cynicism about network reliability, and the humility to accept that your clean new code may not be as resilient as the ugly legacy code that has survived a decade of production reality.

The measure of success is not reaching the finish line.

It’s ensuring that during the entire journey — the shadow diffs, the gradual flips, the CDC reversals, the circuit breaks at 2 AM — the system was never down for a single second.

That’s the engine change. Mid-flight. Without the passengers noticing.

The rest is firefighting. Rollback plans you hope never to use but spend weeks hardening. Runbooks written at midnight for incidents that don’t exist yet. The on-call rotation that quietly expands the week of every flip. Nobody talks about that part — the unglamorous readiness that sits behind every clean migration story.

No smooth-as-silk, cool-as-air moment. Not once. What you get instead is a controlled tension: the quiet confidence that when things go sideways — and they will — the mechanisms are already in place to contain it. That’s the closest to graceful this work ever gets.